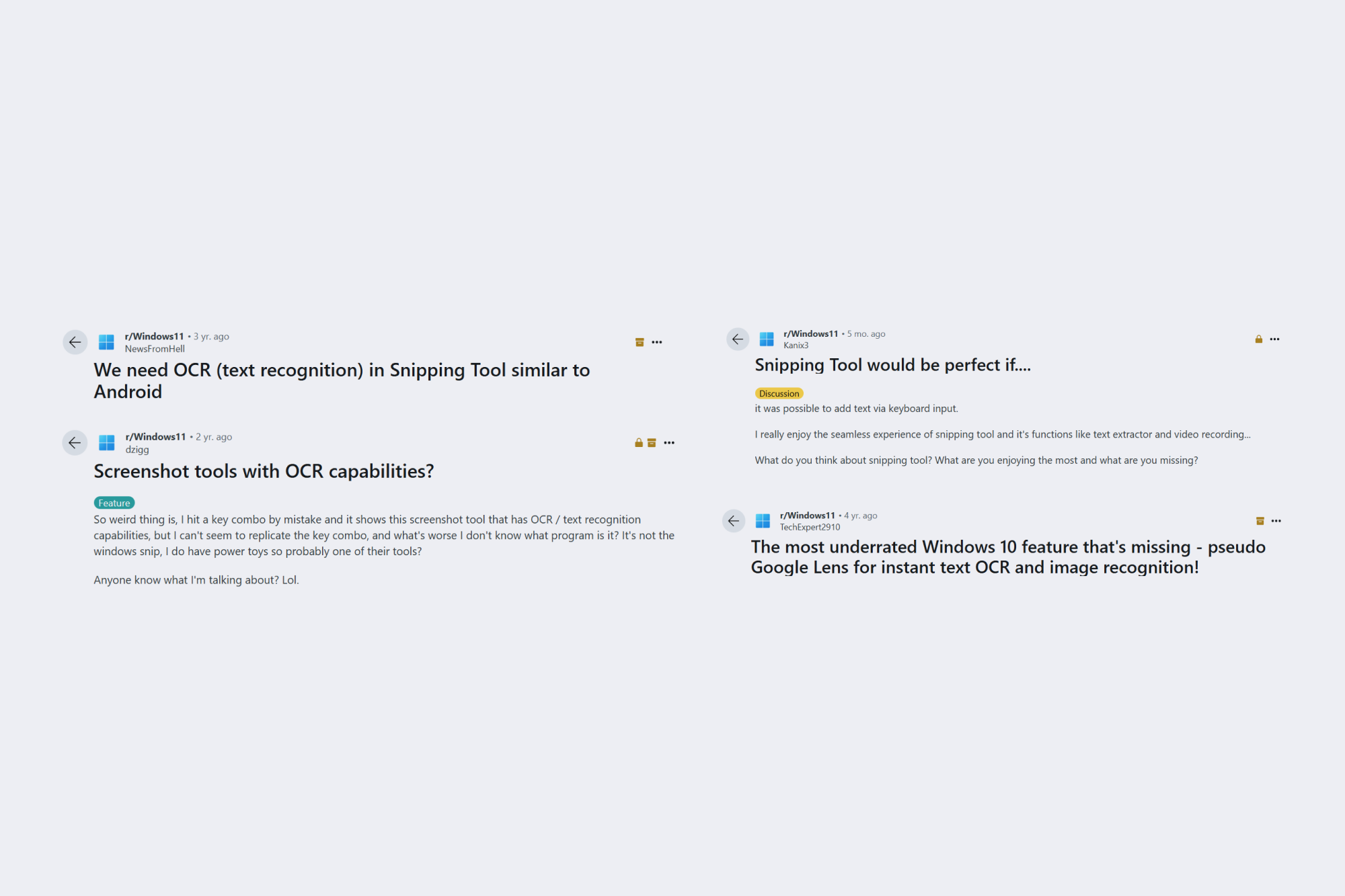

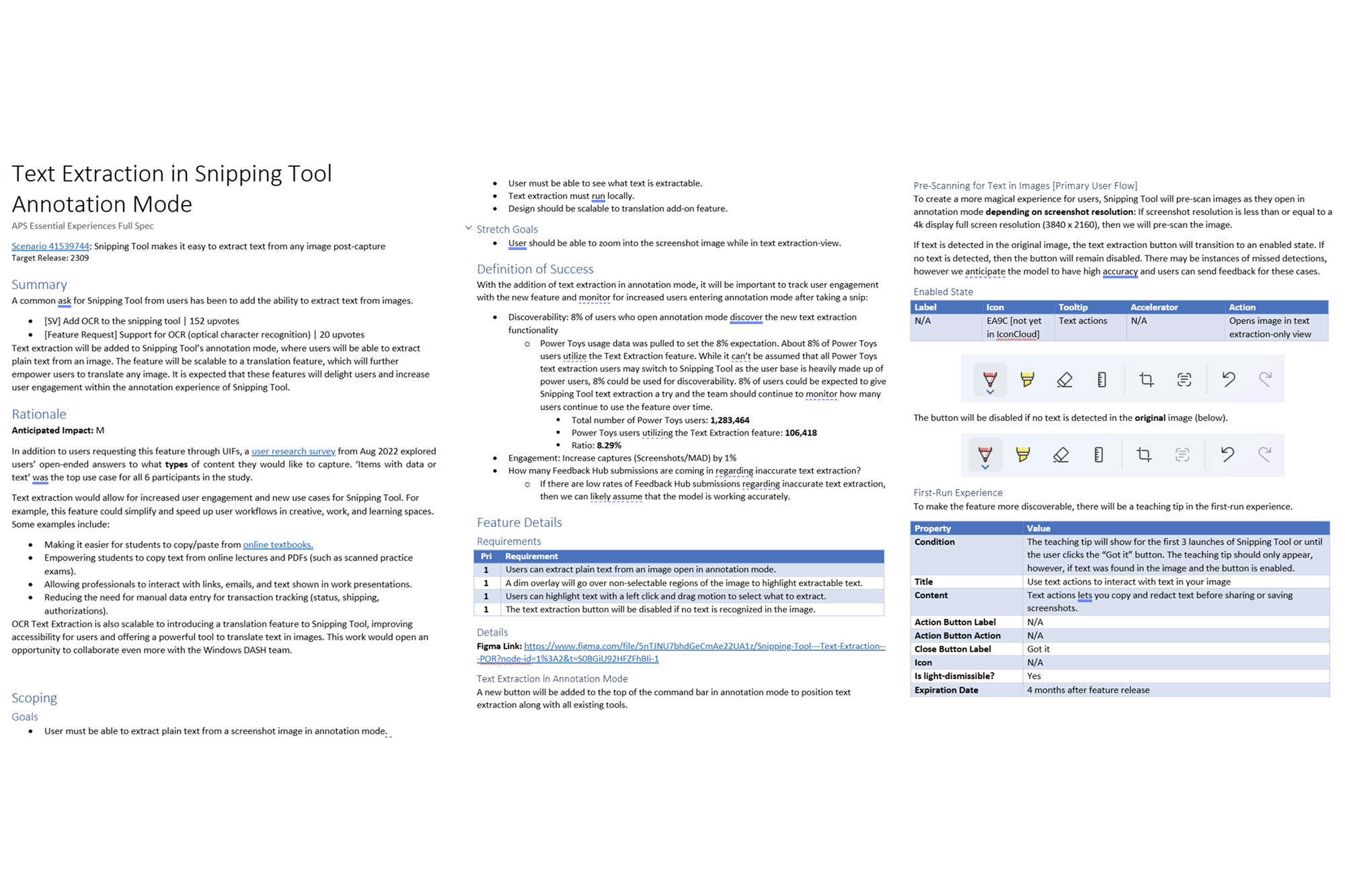

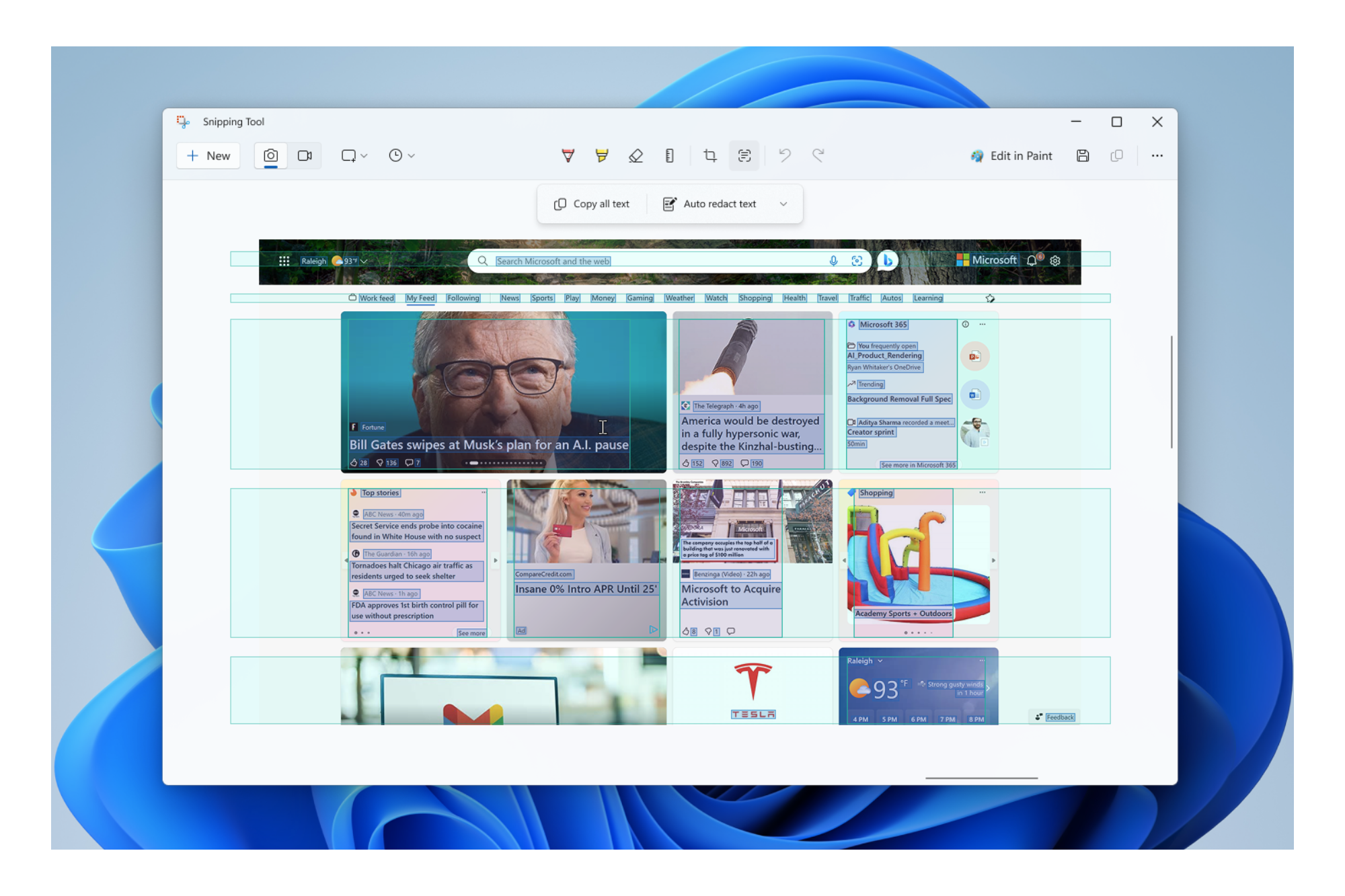

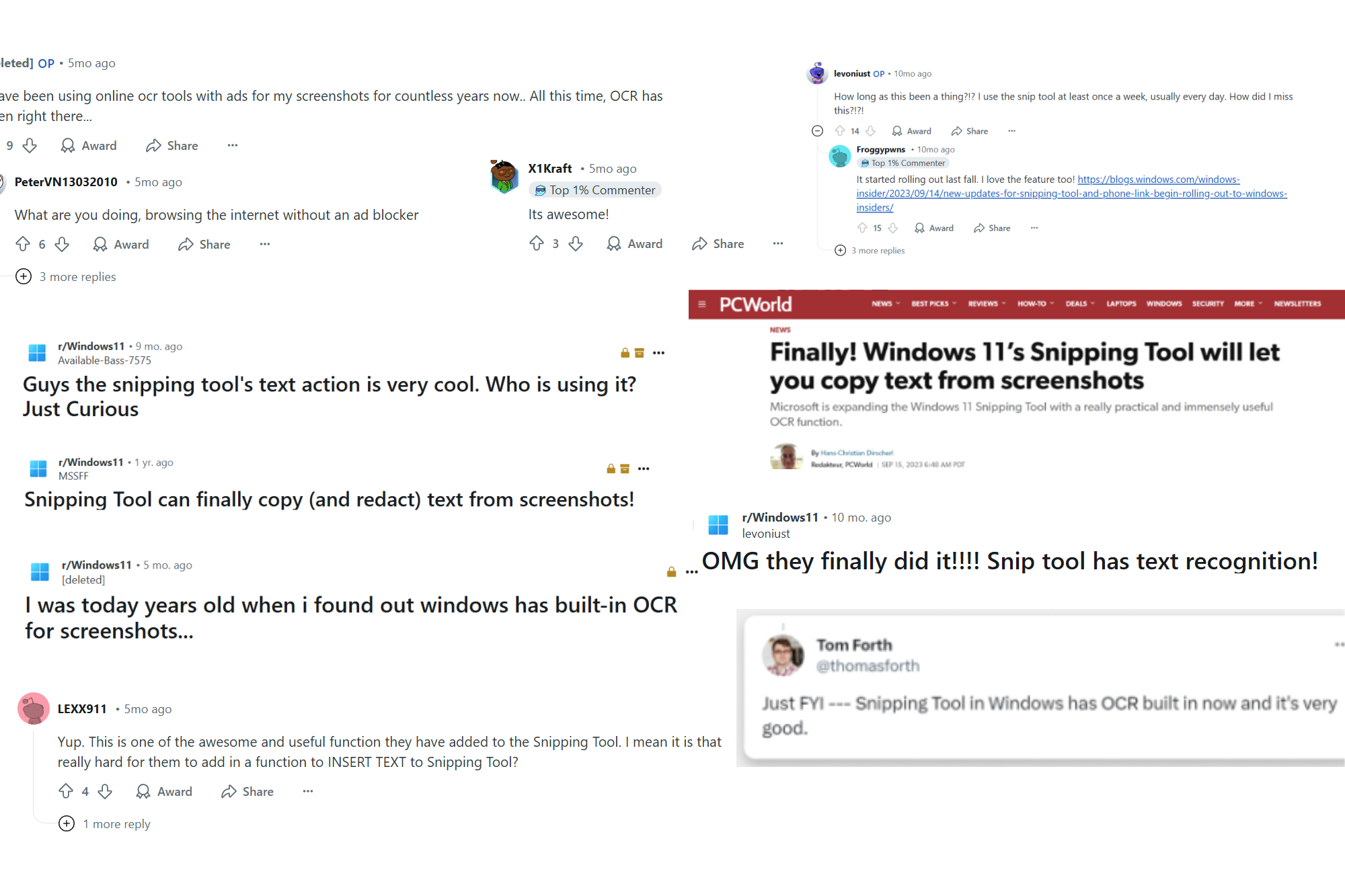

User pain points

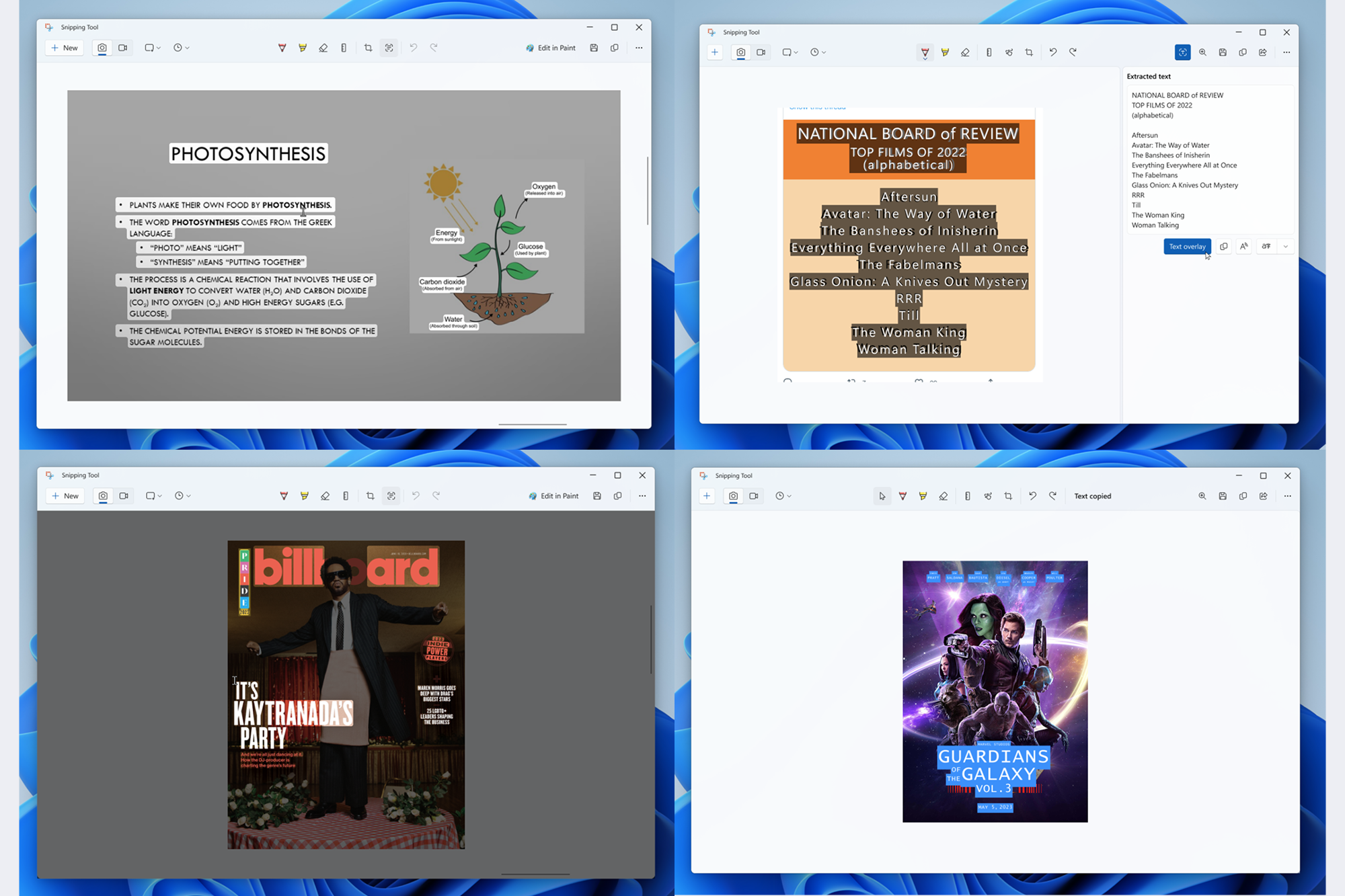

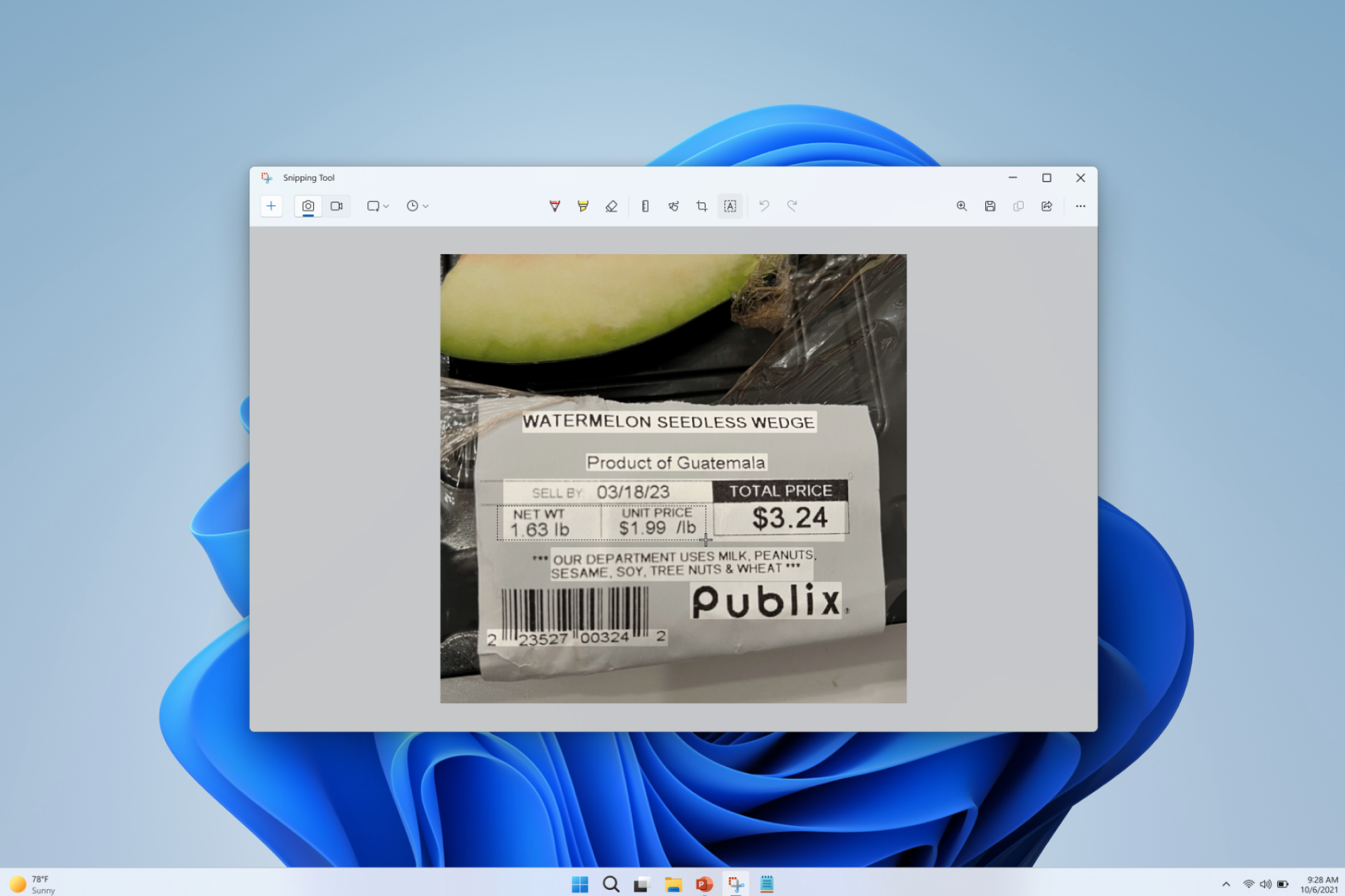

A common request from Snipping Tool users is the ability to extract text from images. Two feedback items reflect this: the system-initiated user feedback item "Add OCR to the snipping tool" (152 upvotes) and the Feedback Hub feature request "Support for OCR (optical character recognition)" (20 upvotes).